Samsung knocked it out of the park at their “Unpacked” event with today’s announcements of the Galaxy Z Flip and the updated Galaxy Buds+. These are gorgeous, innovative products that should push mobile usage in an interesting direction. My first reaction is that if I had a Flip phone and in-ear headphones with mics, I would look at my screen a lot less - especially if my apps could talk! Spending less time looking at your phone is something Drew Austin talked about, after he got AirPods, in the AudioFirst podcast we highlighted yesterday. One of the main upgrades in the Galaxy Buds+ is an additional microphone, moving from two mics to three. Combined with Bixby, these new Samsung offerings could take the lead in voice-first mobile experiences. Maybe we’re going to be wrong about Bixby IDE adoption after all.

Here’s everything Samsung just announced at Unpacked 2020 by Greg Kumparak for TechCrunch

Not to miss out on today’s foldable phone conversation, Microsoft released the first emulator for Windows 10X. How we design for mobile dual-screen devices with voice control is going to be among the biggest challenges for designers and developers over the next few years - and we’re here for them.

A first look at Microsoft’s new Windows 10X operating system for dual screens by Tom Warren for The Verge

In other Microsoft news, our data science engineer Will Rice pointed us to the Microsoft Research blog for this article:

Turing-NLG: A 17-billion-parameter language model by Microsoft by Corby Rosset, Applied Scientist

Here’s what Will said:

“Large transformer-based language models have been leading advancements in NLP for the last few years and they keep getting bigger. Microsoft recently announced a 17-billion-parameter model named Turing-NLG. This is the largest language model ever trained and twice the size of Nvidia's Megatron-LM, the previous titleholder. The advantage of these large models is their effect on downstream NLP tasks. These models can be fine-tuned for specific tasks such as question answering or natural language understanding, which ultimately enable the wide array of features offered by chatbots and virtual assistants. The important take-home from this release is that improved (larger) language models will allow for better interactions with the devices that use natural language interfaces.”

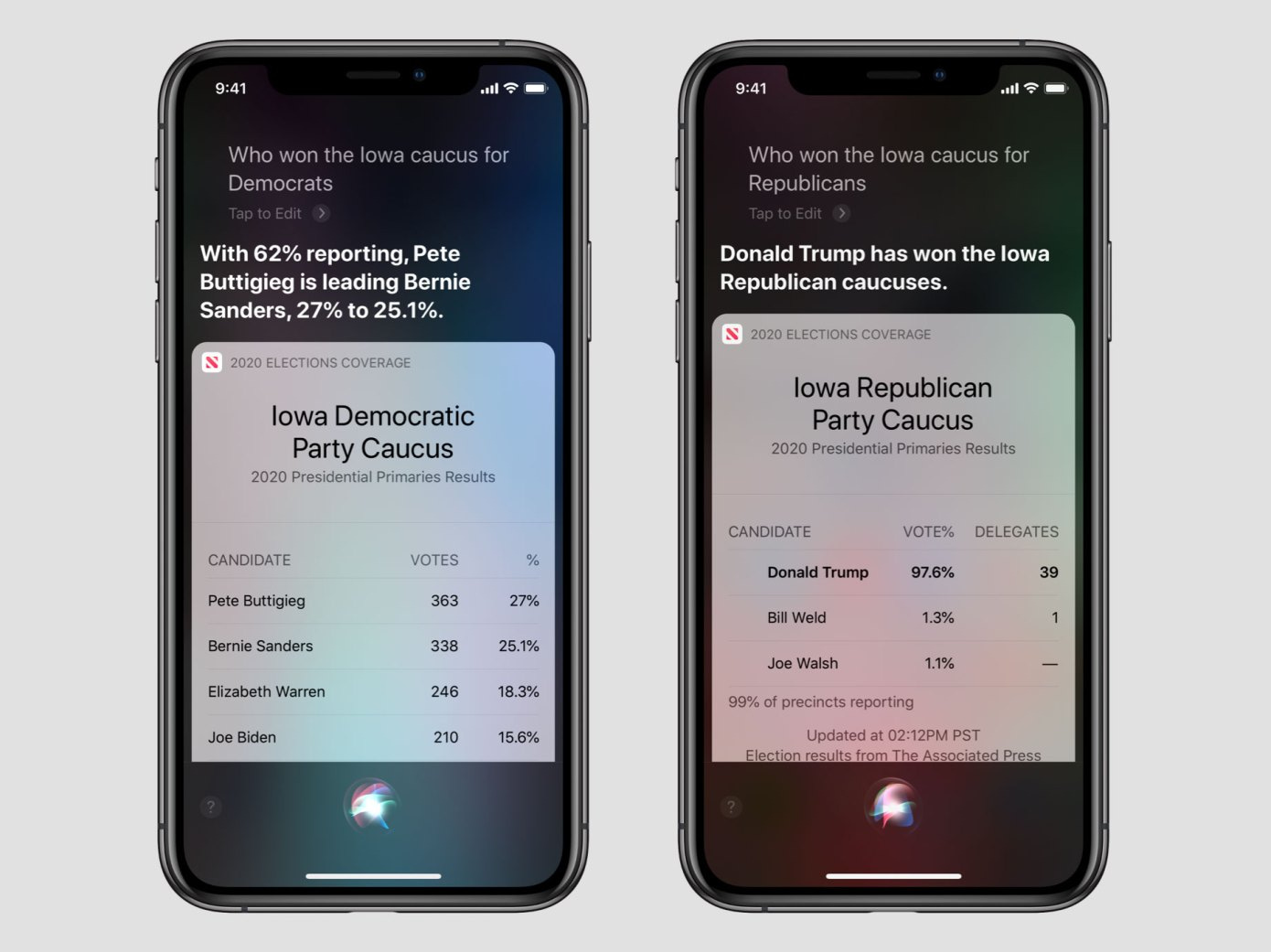

Siri will now answer your election questions by Sarah Perez for TechCrunch

Some people want to give Apple a hard time about their efforts with voice/Siri, but I think they are making some smart moves and this is definitely one of them. As someone who spent most of my career in media, I would find this somewhat worrisome if I ran a media brand. If Siri/Google/Bixby can just read AP data for elections, it is one less reason for readers to pick up their phones and go to your app/website. We have a demo of a mobile app reading RSS feed headlines and decks of articles, and while it needs some polish, you can see the attraction of saying something like “Hey Siri, open TechCrunch” and getting the headlines while you’re in your car or on the go. For a media company, that has got to be better than giving the platforms your data to read for you. Contact us if you want a demo!

As always… If you’re looking for help with a mobile voice for your brand or agency, please let us know!

Thanks again for being a part of the #voice community and connecting with Spokestack. We’re grateful to be a part of it. If you have any comments, please let us know.